Powerful AI models that can realistically simulate the style of virtually any artist have many in the art world worried. In fact, some artists believe the technology has become an existential threat to their livelihoods.

Popular apps such as Prisma Labs’ Lensa let users upload selfies and then transform them into “magical avatars” in a vast array of styles.

But according to Ben Zhao, a professor of computer science at the University of Chicago, these kinds of AI art generators now threaten to put real artists out of work.

Zhao said his team came to this issue almost accidentally in 2020, after working on ways to protect people from facial recognition software. Once that work was publicized, artists began approaching Zhao to ask whether there was a way to protect their work and artistic style from being plagiarized.

Professional artists were very concerned about being displaced by these AI models, while art students were literally dropping out of art school because they saw no future in the profession.

“They were all basically almost resigned to the fact that they were going to lose their jobs and this entire industry was going to disappear,” Zhao said. “And that was really shocking because I think none of us understood the extent of the impact of these models and just how dramatic the effect they had on these artists.”

That was a real wake-up call.

“I don’t know that I’ve ever seen the impact of technology hit that hard in such a short amount of time,” Zhao said. “So that’s where we started working with artists to try to see if we could help. AI is coming to do a lot of things, and that may not be bad, but as far as actual individual mimicry of individual artists, that I think was a really clear line that’s being crossed in terms of ethics.”

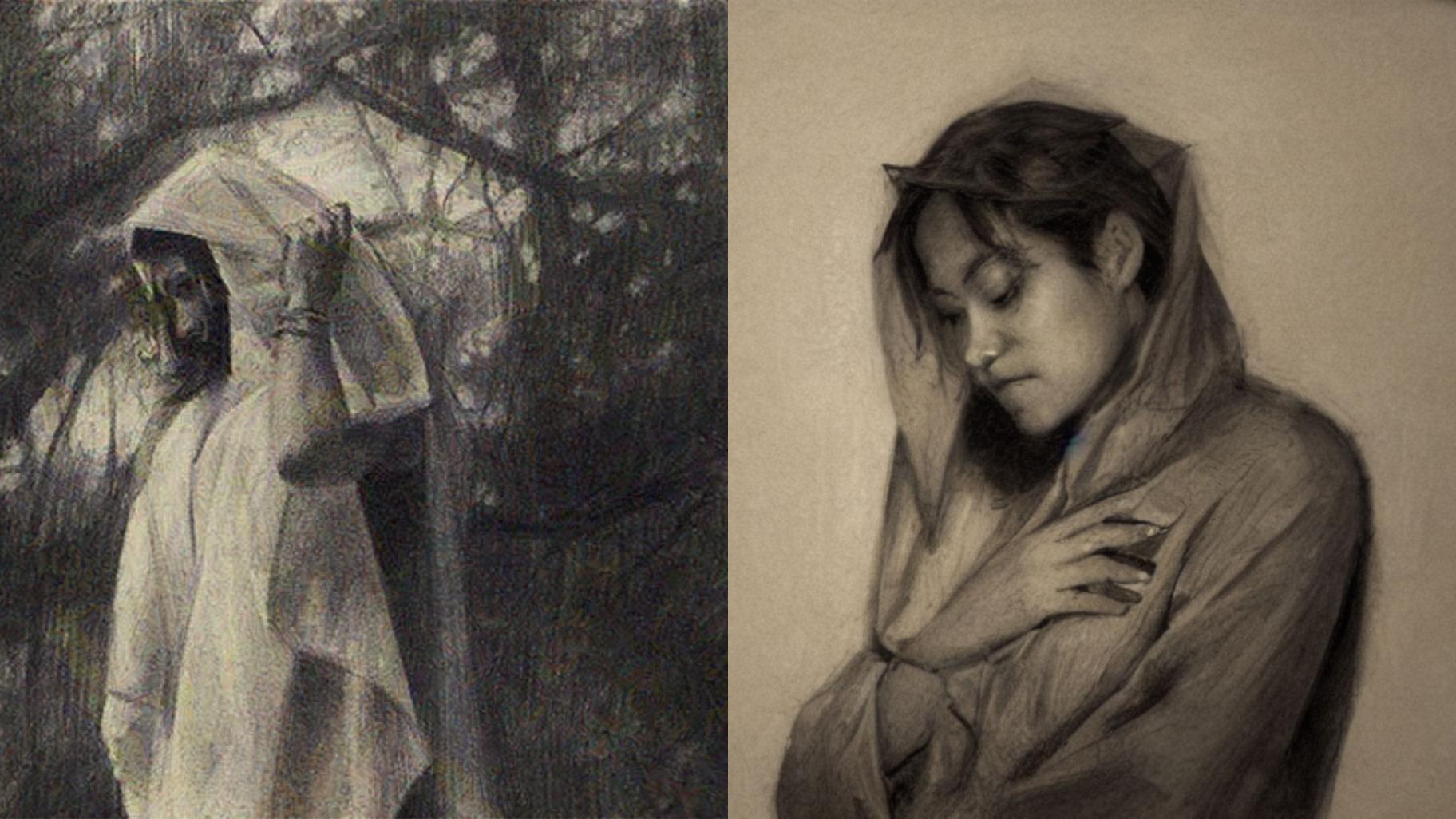

The image on the left is by artist Karla Ortiz; the image on the right was generated by AI mimicking Ortiz’s artistic style. (Courtesy Ben Zhao / University of Chicago)

In response, Zhao’s team created Glaze, a digital form of protection that prevents AI models from accurately copying an artist’s style. The key point is that the changes Glaze makes to a digital image are imperceptible to the human eye, yet they confuse AI models so thoroughly that the models cannot reproduce the artist’s style.

Zhao said it works because AI doesn’t look at art the way humans do. He likened it to viewing things in a different dimension.

“What we are able to do is understand that particular dimension that these AI models are viewing these images through … and we’re able to distort things in that dimension that do not translate into distortions in our visual dimension,” Zhao said.

Glaze is not a permanent fix, because AI technology keeps improving. But Zhao said what it does do is “raise the bar and make it significantly more difficult” to copy an artist’s work.

In the longer term, however, Zhao believes the problem will require policy changes, regulation and legal action to fix.

Several artists have already filed lawsuits against the companies behind the AI models that are now being used to copy their artistic styles.

A new class action lawsuit filed this week in Illinois accuses Lensa creator Prisma Labs of violating the state’s Biometric Information Privacy Act.

According to a statement from the plaintiff’s lawyers, what many Lensa users probably don’t realize is that by downloading the app, “users must give the company access to every photo on their device.”

The company then uses the billions of images it captures to train its AI networks, the statement said, “by exploiting millions of users’ unique facial geometry.”

“Prisma Labs has been unlawfully collecting users’ biometric data without their consent,” said attorney Mike Kanovitz, a partner at the civil rights law firm Loevy & Loevy. “This is in violation of Illinois law and deeply concerning for anyone who believes in data privacy.”